Introduction

In this article we look at ArgoCD, one of the most talked-about tools for continuous integration and continuous deployment (CI/CD) in Kubernetes. In recent months, many leading Internet companies have publicly stated that they use ArgoCD to deploy applications to their clusters. You can see a list here.

To begin with, let’s review what ArgoCD is for and how far its functionality goes. Then we will walk through a typical use case of deploying applications with ArgoCD and the advantages it brings. Finally, we will share our conclusions on its pros and cons and look at the tools that complement ArgoCD to further optimize the continuous integration and deployment process.

What is ArgoCD?

ArgoCD is a tool that allows us to adopt GitOps methodologies for the continuous deployment of applications in Kubernetes clusters.

Its main feature is that it synchronizes the state of the deployed applications with their respective manifests declared in git. This allows developers to deploy new versions of an application simply by modifying the content in git, either with commits to development branches or by modifying the main branch.

Once the code has been modified in git, ArgoCD detects, via a webhook or periodic checks every few minutes, that the application manifests have changed. It then compares the manifests declared in git with those applied in the clusters and updates the latter until they are synchronized.
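
As a rough sketch of where the periodic check is configured: to our knowledge, the polling interval is controlled by the `timeout.reconciliation` key of the `argocd-cm` ConfigMap. The `argocd` namespace and the `180s` value below are assumptions for illustration, so check the defaults of the ArgoCD version you run.

```yaml
# Sketch only: namespace and interval are assumptions, not values from this article.
apiVersion: v1
kind: ConfigMap
metadata:
  name: argocd-cm
  namespace: argocd            # assumes ArgoCD is installed in the "argocd" namespace
  labels:
    app.kubernetes.io/part-of: argocd
data:
  # How often ArgoCD polls git for changes when no webhook notifies it
  timeout.reconciliation: 180s
```

With a webhook configured on the git server, changes are picked up without waiting for the next polling cycle.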

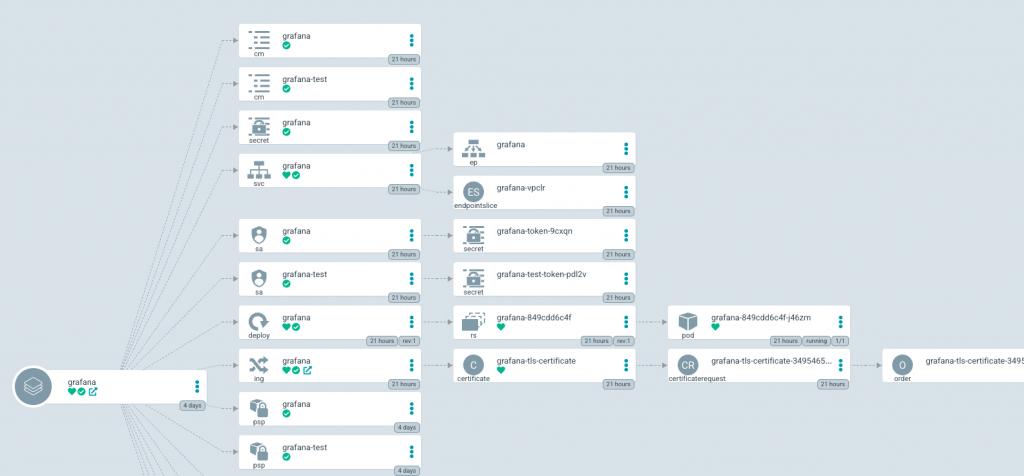

Its user-friendly interface gives a clear view of the content, structure and state of the clusters, and also lets us manipulate resources.

Can ArgoCD automate the entire CI/CD process of an application?

No. ArgoCD takes care of deploying the application once the artifact already exists in a container registry, such as Docker Hub or ECR. This implies that the application code has already been tested and packaged into a container image. At the end of this article we will discuss the options that currently exist for automating that earlier step in a GitOps way.

As we have already explained, ArgoCD synchronizes the state of deployed applications with their respective manifests declared in git. These manifests do not live in the git repository of the application code itself but, as best practices suggest, in a separate repository that contains the application’s Kubernetes infrastructure code, which can take the form of Helm charts, Kustomize applications, ksonnet…

To better explain the main benefits offered by ArgoCD, let’s look at an example use case.

Using ArgoCD

In this example we will see how ArgoCD can deploy both third-party applications, whose Helm charts are maintained by another organization, and our own applications, for which we define the chart ourselves.

For the example we will deploy a monitoring stack consisting of Prometheus, Grafana and Thanos using their helm charts.

ArgoCD deploys applications through a custom object called Application, whose main attributes are a source and a destination. The source can be read in several formats; in this example our Application objects will read and deploy Helm charts both from a chart repository and from a git repository. The destination is the cluster to which the content of the source will be deployed. In the application configuration we can tell ArgoCD to automatically keep the state of the deployed Kubernetes objects synchronized with the configuration indicated in the source (charts/git). This option is very useful because ArgoCD checks every few minutes that everything is still in sync. By contrast, deploying applications directly with helm commands only ensures synchronization at the time of deployment.
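
To make the source and destination attributes concrete, here is a minimal sketch of an Application that deploys Grafana from its public chart repository with automatic synchronization enabled. The chart version, the `monitoring` namespace and the `default` project are assumptions chosen for illustration, not values taken from our setup.

```yaml
# Sketch of an Application reading a Helm chart from a chart repository.
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: grafana
  namespace: argocd                 # assumes ArgoCD runs in the "argocd" namespace
spec:
  project: default                  # assumption: the built-in default project
  source:
    repoURL: https://grafana.github.io/helm-charts
    chart: grafana
    targetRevision: 6.50.0          # placeholder chart version, replace with a real one
  destination:
    server: https://kubernetes.default.svc   # the cluster ArgoCD itself runs in
    namespace: monitoring           # hypothetical target namespace
  syncPolicy:
    automated:
      prune: true                   # delete resources that were removed from the source
      selfHeal: true                # revert manual changes made directly in the cluster
```

Leaving out the `syncPolicy.automated` block keeps the application in manual mode, where ArgoCD only reports drift and waits for someone to trigger the sync.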

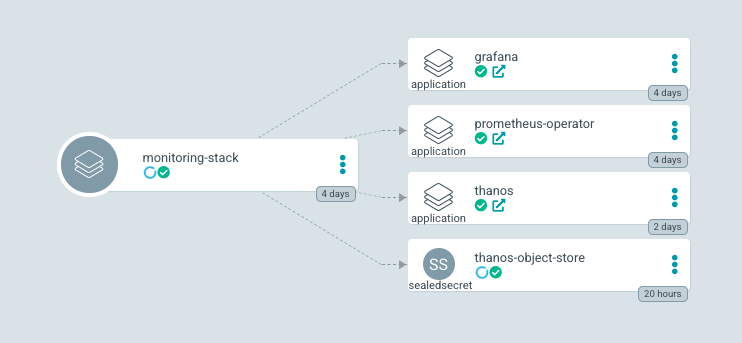

Now that we have explained what the Application object is, we are going to create four of them for our monitoring stack. Why four applications if the stack contains only three services (Prometheus, Grafana and Thanos)?

ArgoCD also lets us group applications following the “app of apps” pattern: an ArgoCD application that deploys other applications, and so on recursively. For our monitoring stack, we are going to create a fourth application, called “monitoring-stack”, that acts as the parent and deploys the other three.

To create an application we can define an ArgoCD Application manifest, as indicated in this page of the documentation, or use the command line. ArgoCD also has a great UI that lets you create applications manually; you can see how here.

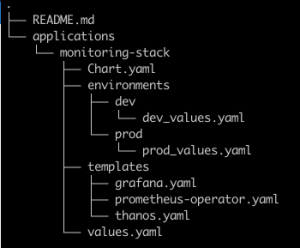

The “monitoring-stack” application will point its source to a git repository with a Helm chart. This chart will contain the manifests of the other three applications in the “templates” directory in yaml format. These files are Application object definitions that point to the relevant official Helm chart of each service. Using the “values files”, we will be able to deploy different versions in different environments.
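
As an illustration, one of the child definitions in the “templates” directory could look roughly like the sketch below. The file name and the values keys (`prometheus.chartVersion`, `destination.server`) are hypothetical names picked for this example.

```yaml
# templates/prometheus.yaml (hypothetical file inside the parent chart)
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: prometheus
  namespace: argocd                 # child Applications are created next to ArgoCD
spec:
  project: default
  source:
    repoURL: https://prometheus-community.github.io/helm-charts
    chart: prometheus
    # Chart version comes from the environment's values file (hypothetical key)
    targetRevision: {{ .Values.prometheus.chartVersion | quote }}
  destination:
    server: {{ .Values.destination.server }}   # hypothetical key
    namespace: monitoring           # assumed target namespace
  syncPolicy:
    automated:
      prune: true
      selfHeal: true
```

Because the version and the destination are read from values, the same template can render a different Application for each environment.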

Once the templates of the “monitoring-stack” chart have been defined, we will create the parent ArgoCD Application and point its source to the repository mentioned above. ArgoCD will detect that it is a Helm chart, and we can then indicate the path of the specific values file, for example “prod_values.yaml”.

Once the application has been configured manually, the user interface shows all the created objects, organized hierarchically.
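
The same parent application can also be expressed declaratively. The manifest below is a sketch under assumptions: the repository URL is a placeholder, and we assume the chart sits at the root of the repository on the `main` branch.

```yaml
# Sketch of the parent "monitoring-stack" Application (app of apps).
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: monitoring-stack
  namespace: argocd
spec:
  project: default
  source:
    repoURL: https://git.example.com/platform/monitoring-stack.git  # placeholder URL
    targetRevision: main            # assumed branch
    path: .                         # assumes the chart is at the repository root
    helm:
      valueFiles:
        - prod_values.yaml          # values file for the production environment
  destination:
    server: https://kubernetes.default.svc
    namespace: argocd               # the child Application objects are created here
```

Note that the destination namespace is the one where ArgoCD looks for Application objects, since what this chart renders is other applications rather than workloads.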

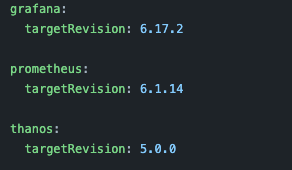

Since the applications are synchronized with our repository, and the charts are parameterized with templates and values, deploying a new version of any of our applications only requires modifying the values file through git commits.
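
For instance, a values file for the parent chart might look like the following sketch. Every key and version number here is hypothetical and only illustrates the kind of change a commit would contain.

```yaml
# prod_values.yaml (hypothetical contents)
destination:
  server: https://kubernetes.default.svc
prometheus:
  chartVersion: "1.2.3"   # placeholder; bumping this value in a commit rolls out a new version
grafana:
  chartVersion: "4.5.6"   # placeholder
thanos:
  chartVersion: "7.8.9"   # placeholder
```

A second file with the same keys and different versions, used by another parent application, is enough to describe another environment.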

ArgoCD will detect the changes in the repository and apply them to the Kubernetes cluster through a rolling update deployment.

As a side note, ArgoCD Image Updater can save us from doing this last step manually, or from having to develop a complex pipeline that updates the values.yaml file in git whenever we want to deploy a new image.

This tool periodically queries our image repository for the latest tags, looking for new artifacts to deploy. Once it finds one, it automates the deployment by updating the configuration in git with the new tag.

It is worth mentioning that, at the time of writing, there is not yet a stable version of ArgoCD Image Updater, although one is expected soon.
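
As a hedged sketch, in the annotation-based releases of the tool this behaviour is enabled by annotating the Application. The image name, the `myapp` alias and the application name below are hypothetical, and the annotation names may differ in later versions, so treat this as an illustration rather than a reference.

```yaml
# Sketch: Application annotated for ArgoCD Image Updater (annotation-based releases).
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: my-app                      # hypothetical application
  namespace: argocd
  annotations:
    # Images to watch, in the form <alias>=<image>
    argocd-image-updater.argoproj.io/image-list: myapp=registry.example.com/team/my-app
    # Pick the highest semantic version among the available tags
    argocd-image-updater.argoproj.io/myapp.update-strategy: semver
    # Commit the new tag to git instead of only changing the live Application
    argocd-image-updater.argoproj.io/write-back-method: git
# spec omitted: source, destination and syncPolicy as in any other Application
```

The git write-back method is the one that matches the workflow described here, because the new tag ends up recorded in the repository.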

In this example we have created an application that points to a repository that creates applications which point to official Helm charts, but this hierarchy can be extended much further, following the “app of apps” pattern.

Another interesting feature of ArgoCD is that it allows us to deploy applications to different clusters. There are several ways to do this, but the most direct one is the ApplicationSet resource.

In its manifest we can specify a list of clusters to deploy to simultaneously, each with a different path in the repository. This lets us keep a different version for each cluster in the same repository.

ArgoCD’s relative ease of installation is another point in its favor; you can consult the steps here.
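
A minimal sketch of such a manifest, using the list generator, could look like this. The cluster names, API server URLs, repository URL and paths are all placeholders invented for the example.

```yaml
# Sketch of an ApplicationSet that deploys one Application per listed cluster.
apiVersion: argoproj.io/v1alpha1
kind: ApplicationSet
metadata:
  name: monitoring-stack
  namespace: argocd
spec:
  generators:
    - list:
        elements:
          - cluster: staging                        # placeholder name
            url: https://staging.k8s.example.com    # placeholder API server URL
            path: envs/staging                      # hypothetical path in the repository
          - cluster: production
            url: https://prod.k8s.example.com
            path: envs/production
  template:
    metadata:
      name: 'monitoring-stack-{{cluster}}'
    spec:
      project: default
      source:
        repoURL: https://git.example.com/platform/monitoring-stack.git  # placeholder URL
        targetRevision: main
        path: '{{path}}'            # each cluster reads its own directory
      destination:
        server: '{{url}}'
        namespace: argocd
```

Each element of the list produces one Application from the template, so adding a cluster is a matter of adding one entry. The target clusters must already be registered in ArgoCD.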

Automation of the entire CI/CD process with Argo tools

If we want to go a step further and automate the entire CI/CD process in Kubernetes, we can complement ArgoCD with the other tools offered by the Argo project.

By combining Argo Events, Argo Workflows, ArgoCD and Argo Rollouts, further automation is possible following best practices in the current continuous integration standards.

Viktor Farcic explains it very clearly in this video.

To address the added complexity of installing and managing all these Argo project tools, some applications that bundle the entire stack have already been released. They let us configure integration and deployment pipelines from a simplified, higher-level layer. Below we mention a couple of them, although we will not analyze their specific features in this post.

Devtron is an open source tool that installs this Argo stack and other tools under the hood, and promises to let us automate the entire CI/CD process from the user interface. Devtron simplifies configuration considerably, since we interact with the underlying tools from a high-level layer without installing any of them manually. After testing it, however, we do not believe the tool is mature enough yet to be used in a production environment.

Like Devtron, Codefresh uses the whole Argo stack to automate integration and deployment. Apart from the fact that the tool is still in early access, a big difference is that it will be offered as SaaS. As the pricing section shows, the full automation option will be paid, and its price is not listed on the website.

Conclusions

ArgoCD is a very useful tool for automating the deployment process using GitOps best practices. With it, developers can test new versions of applications more quickly and deploy to production safely once testing is complete. In addition, thanks to the auto-sync feature and its polished interface, ArgoCD lets us keep track of the status of applications and their resources deployed in Kubernetes at all times. Combined with the other tools of the Argo project, it lets us automate the entire CI/CD process (and much more that is outside the scope of this post) following current good practices.

On the downside, ArgoCD adds an extra layer of complexity to our configuration: it has many different options and introduces custom objects and concepts that we may not be familiar with yet. It can be overkill for a very small cluster with only a handful of applications.