Introduction

We are all familiar with the “big three” offer in managed Kubernetes: EKS, GKE and AKS.

But today, apart from these three and a few others who want to play in the same league (Oracle or IBM, for example), there are less well-known providers that, unable to compete on the breadth of their service catalog, try to make a name for themselves by offering simplicity and lower prices. One of the benefits of the Kubernetes “explosion” is that with it and little more (a load balancer, some block and object storage and a managed database) you can go very far before needing what the big ones offer.

So, when we talk about “simple and cheap” Kubernetes, the formula is to offer the “managed Kubernetes” service (the master nodes are hosted and managed by the provider) without charging for it. And this is exactly what alternatives such as Scaleway, Linode, OVH or DigitalOcean provide.

Testing a managed cluster

At Geko Cloud we are developing a product based on Kubernetes that you will soon hear more about, so we decided to use it to test one of the services on the list (in this case, DigitalOcean’s managed Kubernetes service).

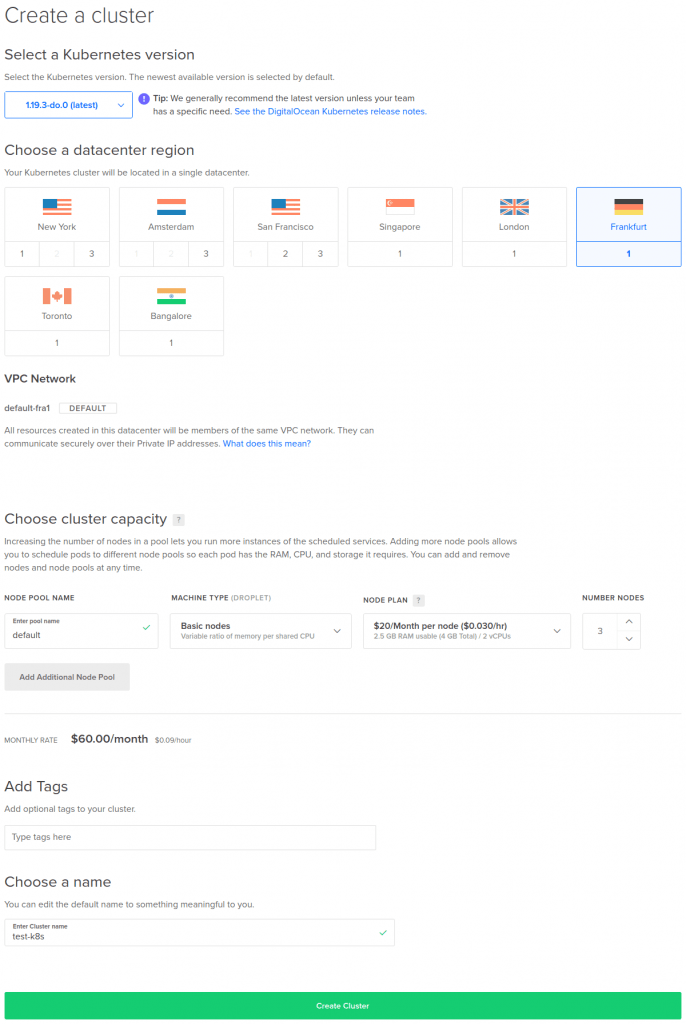

Creating a cluster is very simple: just select the version, the region, the type of node for the pool and give it a name:

In our case, since this was a test, we chose to create basic droplets and rely on the cluster autoscaler in case the application needed more resources.
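The same choices can also be expressed on the command line. The sketch below assumes DigitalOcean's `doctl` CLI is installed and authenticated. The function name `create_cluster`, the cluster name, the region and the size slugs are hypothetical placeholders, not the values we used. By default it only prints the command, so nothing is created unless `DRY_RUN=0` is set.

```shell
#!/usr/bin/env bash
# Sketch: build a `doctl kubernetes cluster create` command with one autoscaling node pool.
# Every name and slug passed in is an illustrative placeholder.

create_cluster() {
  local name="$1" region="$2" size="$3" min="$4" max="$5"

  # --node-pool takes a ";"-separated list of key=value pairs.
  local pool="name=${name}-pool;size=${size};count=${min};auto-scale=true;min-nodes=${min};max-nodes=${max}"

  if [ "${DRY_RUN:-1}" = "1" ]; then
    # Dry run (default): print the command instead of executing it.
    echo "doctl kubernetes cluster create ${name} --region ${region} --version latest --node-pool \"${pool}\""
  else
    doctl kubernetes cluster create "${name}" --region "${region}" --version latest --node-pool "${pool}"
  fi
}
```

For example, `create_cluster test-cluster fra1 s-2vcpu-4gb 3 6` prints a command for a pool of three basic droplets that may grow to six. Once the cluster exists, `doctl kubernetes cluster kubeconfig save test-cluster` should fetch its kubeconfig.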

When the cluster was ready (the process can take about 10-15 minutes), we only had to download the kubeconfig file and launch the application installation process. And then the problems began.

Observed problems

After a while, the API server started to misbehave, responding very slowly or not at all. Symptoms:

- Very high response times, sometimes even more than a minute

      bash-5.0# time kubectl get pods -A
      NAMESPACE      NAME                            READY   STATUS    RESTARTS   AGE
      istio-system   istio-init-crd-10-1.4.8-86xf9   1/1     Running   1          7m1s
      …
      real    1m14.507s
      user    0m0.174s
      sys     0m0.028s

- Connection errors

      bash-5.0# kubectl get pods -A
      Unable to connect to the server: unexpected EOF

- Timeouts in the TLS handshake

      bash-5.0# kubectl get pods -A
      Unable to connect to the server: net/http: TLS handshake timeout
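To watch for these symptoms without timing commands by hand, a small wrapper can help. This is a minimal sketch rather than the tooling we used: the helper name `probe` is hypothetical, and it reports only whole seconds and the exit status of whatever command it is given.

```shell
#!/usr/bin/env bash
# Sketch: run a command, discard its output, and report elapsed seconds and exit status.

probe() {
  local start end status
  start=$(date +%s)
  "$@" >/dev/null 2>&1
  status=$?
  end=$(date +%s)
  echo "elapsed=$((end - start))s status=${status}"
}

# Example (needs a working kubeconfig):
#   probe kubectl get pods -A --request-timeout=90s
```

A non-zero status with a short elapsed time points at connection errors, while a zero status with a long elapsed time matches the slow responses shown above.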

The problem had to be related to the installation because, before that, everything worked perfectly.

DigitalOcean, like the other providers, does not offer any information about the resources dedicated to the master nodes (number of nodes, node size…) nor access to the control plane logs or monitoring, so there was no way to know what was happening.

A quick search made us realize that we were not alone with this kind of problem, although we also saw that many others were happy users of the service.

Solution

This made us suspect that not all master nodes are created equal, and that the only parameter in the creation process that could make a difference was the configured node pool.

To verify our theory, we first created a cluster with a larger node pool (6 nodes instead of 3), with identical results.

So we tried to create a node pool with non-basic nodes (CPU-Optimized in this case). And there we hit the nail on the head: the installation process finished smoothly and the API server responded properly all the time.
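A comparison like this is easier to judge with numbers than by impression. The sketch below is one way to do it, not the procedure we followed: the helper name `count_failures` and the kubeconfig file names in the example are hypothetical. It repeats a command a fixed number of times and counts how many attempts succeed.

```shell
#!/usr/bin/env bash
# Sketch: run a command N times and count successes and failures.

count_failures() {
  local attempts="$1" ok=0 failed=0 i=0
  shift
  while [ "$i" -lt "$attempts" ]; do
    if "$@" >/dev/null 2>&1; then
      ok=$((ok + 1))
    else
      failed=$((failed + 1))
    fi
    i=$((i + 1))
  done
  echo "ok=${ok} failed=${failed}"
}

# Example (kubeconfig paths are placeholders):
#   count_failures 20 kubectl --kubeconfig ./basic.yaml get --raw /readyz --request-timeout=30s
#   count_failures 20 kubectl --kubeconfig ./cpu-optimized.yaml get --raw /readyz --request-timeout=30s
```

Running it against both clusters while the installation is in progress gives a rough failure count for each node type.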

Conclusion

Clearly, nobody gives anything away, and you cannot expect a dedicated master node with 16 cores and 64 GB of RAM when paying 20 euros a month for a basic droplet. Still, it wouldn’t hurt if providers were more transparent about the capabilities and limitations of the service they offer (even if it is free). That would save more than one user some headaches and frustration.

So if you are planning to use this service to host an application that makes intensive use of the API server, you’d better opt for non-basic droplets to avoid performance problems.

And remember that if you need anything we will be happy to listen to you, and you can also check our blog to find other useful posts like this one!